Explorations on Multi-lingual Neural Machine Translation

- Orhan Firat | Middle East Technical University

Deep (recurrent) neural networks have been shown to successfully learn complex mappings between input and output sequences of arbitrary length, within the effective framework of encoder-decoder networks. We investigate extensions of these sequence-to-sequence models that handle multiple sequences at the same time, within a single model. This reduces to the problem of multi-lingual neural machine translation (MLNMT). We explore the applicability and benefits of MLNMT on (1) large-scale machine translation tasks between all six languages of the WMT’15 shared task, (2) low-resource language transfer problems, for Finnish, Uzbek and Turkish into English translation, (3) multi-source translation tasks where multi-way parallel text is available, and (4) zero-resource translation tasks where no bi-text is available between two languages. We will further discuss natural extensions of the MLNMT model for system combination (of SMT and NMT models) and larger-context NMT (given entire documents during translation).

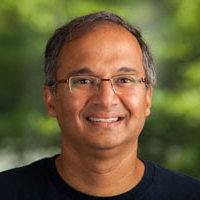

Arul Menezes

Partner Research Manager
